
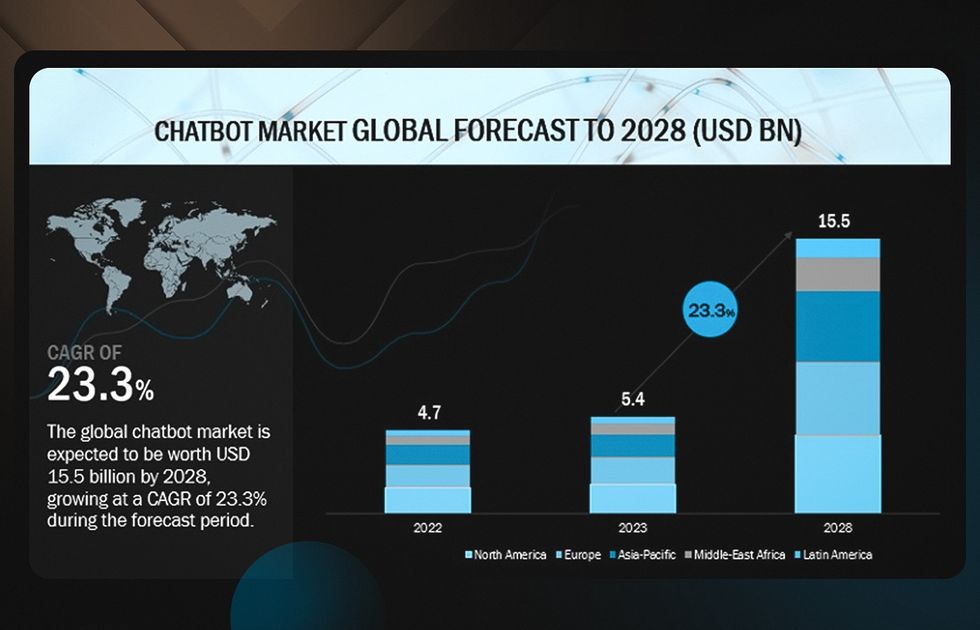

Imagine being stuck in traffic while running late to an important meeting at work.

You feel your face overheating as your thoughts start to race along: “they’re going to think I’m a horrible employee,” “my boss never liked me,” “I’m going to get fired.” You reach into your pocket, open an app and send a message.

The app replies by prompting you to choose one of three predetermined answers. You select “Get help with a problem.”

An automated chatbot that draws on conversational artificial intelligence (CAI) is on the other end of this text conversation. CAI is a technology that communicates with humans by tapping into “large volumes of data, machine learning, and natural language processing to help imitate human interactions.”

Woebot is an app that offers one such chatbot. It was launched in 2017 by psychologist and technologist Alison Darcy. Psychotherapists have been adapting AI for mental health since the 1960s, and now, conversational AI has become much more advanced and ubiquitous, with the chatbot market forecast to reach US$1.25 billion by 2025.

But there are dangers associated with relying too heavily on the simulated empathy of AI chatbots.

Automated chatbots can be beneficial to people who may need immediate help, but they are not meant to replace traditional therapy. (Shutterstock)

Should I fire my therapist?

Research has found that such conversational agents can effectively reduce the depression symptoms and anxiety of young adults and those with a history of substance abuse. CAI chatbots are most effective at implementing psychotherapy approaches such as cognitive behavioral therapy (CBT) in a structured, concrete and skill-based way.

CBT is well known for its reliance on psychoeducation to enlighten patients about their mental health issues and how to deal with them through specific tools and strategies.

These applications can be beneficial to people who may need immediate help with their symptoms. For example, an automated chatbot can help people get through the long wait to receive mental health care from professionals. They can also help those experiencing mental health symptoms outside of their therapist’s session hours, and those wary of stigma around seeking therapy.

The World Health Organization (WHO) has developed six key principles for the ethical use of AI in health care. With its first and second principles, protecting autonomy and promoting human safety, the WHO emphasizes that AI should never be the sole provider of health care.

Today’s leading AI-powered mental health applications market themselves as supplementary to services provided by human therapists. On their websites, both Woebot and Youper state that their applications are not meant to replace traditional therapy and should be used alongside mental health-care professionals. Wysa, another AI-enabled therapy platform, goes a step further and specifies that the technology is not designed to handle crises such as abuse or suicide, and is not equipped to offer clinical or medical advice.

Thus far, while AI has the potential to identify at-risk individuals, it cannot safely resolve life-threatening situations without the help of human professionals.

Research has found that such conversational chatbots can help manage feelings of depression and anxiety. (Shutterstock)

From simulated empathy to sexual advances

The third WHO principle, ensuring transparency, asks those employing AI-powered health-care services to be honest about their use of AI. But this was not the case for Koko, a company providing an online emotional support chat service.
